Executive summary

Google’s latest Gemini beta turns its AI assistant from a purely responsive tool into a constrained automation engine that can navigate multiple apps to handle tasks such as ordering food or booking rides. Google confines the beta to Pixel 10/10 Pro and Samsung Galaxy S26 devices in the U.S. and Korea, and layers on explicit user initiation, real-time monitoring, a sandboxed “secure virtual window,” and manual confirmation for final actions. Together, these choices reveal a fundamental tension in AI assistant design: expanding user agency through cross-app orchestration while constraining the system’s reach to manage security, privacy, and integration risk. The next generation of AI assistants will likely be shaped as much by risk controls as by functional ambitions.

Key takeaways

- Restricted rollout: Google confines cross-app automation to a narrow set of food, grocery, and rideshare partners on Pixel 10/10 Pro and Galaxy S26 handsets in the U.S. and Korea.

- Layered safeguards: According to Google, every automation begins with an explicit user command, runs inside an isolated “secure virtual window,” surfaces live progress notifications, and halts before any final payment or submission step.

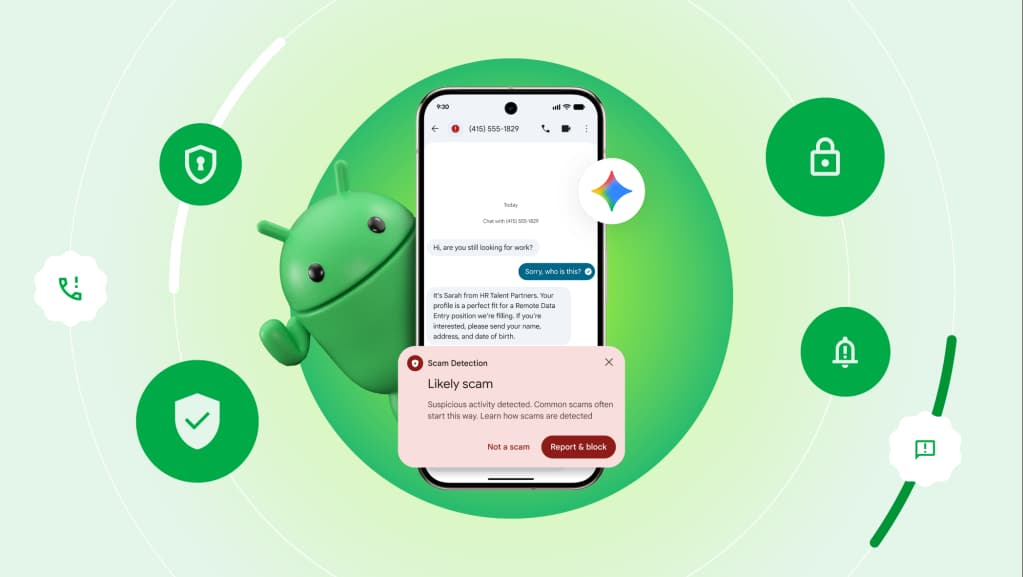

- Complementary enhancements: Google says it has also broadened Gemini-driven scam detection across more Galaxy and Pixel models and expanded Circle to Search’s screen recognition to handle multiple objects per capture.

- Tradeoffs on display: The restricted scope shows Google trading breadth, in both device support and regional availability, for enforceable control points and visible audit trails during automation.

Functional shift in assistant behavior

Prior iterations of Gemini and Google Assistant focused primarily on responding to natural-language queries by returning information, links, or simple device controls. With the new beta, Google positions Gemini as capable of sequential, cross-app navigation: it can open a rideshare app, select pickup and drop-off locations, browse menu categories in a food delivery app, and reach the checkout screen without pressing the final confirmation button. In effect, the assistant crosses a threshold from one-shot Q&A to limited agentic behavior, orchestrating a series of UI interactions that traditionally require manual input.

This shift narrows the gap between AI assistants and automation frameworks such as Tasker or enterprise robotic process automation (RPA) tools. Yet Google’s approach remains distinct: it embeds the automation inside the mobile OS’s app context rather than exporting tasks to a cloud service. According to Google’s announcement, the entire workflow executes locally within a specially isolated environment on the device, with no third-party server performing the UI logic. If the “secure virtual window” operates as described, it should limit the assistant’s ability to access data outside the task sequence, thereby reducing the potential attack surface compared with conventional on-device automation scripts.

Security framing and device-level controls

Google explicitly frames the “secure virtual window” as a core protective measure. In Google’s words, the window “sandboxes all automation steps, preventing other apps or OS components from intercepting taps or scraping data during execution.” While this claim suggests a hardened execution environment, independent validation of the window’s isolation guarantees is not yet available. If the sandbox holds up under adversarial testing, it could serve as a model for on-device agentic workflows in contexts that handle sensitive information, from financial operations to enterprise resource planning.

Beyond sandboxing, Google has layered real-time progress notifications that enable users to view and stop automations midstream. This transparency addresses common concerns around silent or unexpected AI-driven actions—particularly in scenarios involving credentials, payments, or personal data. The manual confirmation requirement for final payment or order taps further shifts ultimate control back to the human, ensuring that the assistant stops short of completing any transaction without explicit sign-off.

Nevertheless, security analysts caution that any system capable of initiating multi-step interactions with commerce apps may introduce new failure modes. For example, a misinterpreted prompt could steer the automation toward unintended menu items or delivery addresses. Both the robustness of the sandbox and the accuracy of the assistant’s UI parsing will be critical for preventing accidental charges or data leaks. Until independent security reviews are published, Google’s claim of a reduced attack surface should be treated as a vendor assertion rather than a verified guarantee.
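The safeguards described in this section (explicit initiation, live progress updates, a user stop control, and a halt before the final action) can be pictured as a small state machine. The sketch below is purely illustrative: `GatedAutomation`, `Step`, `State`, and every method name are hypothetical, and nothing here reflects how Google actually implements Gemini or its secure virtual window.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Callable, List


class State(Enum):
    IDLE = auto()
    RUNNING = auto()
    AWAITING_CONFIRMATION = auto()
    COMPLETED = auto()
    CANCELLED = auto()


@dataclass
class Step:
    description: str
    is_final: bool = False  # hypothetical flag: a payment or submission tap


@dataclass
class GatedAutomation:
    """Hypothetical model of a user-gated automation run (not Google's API)."""
    steps: List[Step]
    notify: Callable[[str], None] = print  # stands in for progress notifications
    state: State = State.IDLE
    executed: List[str] = field(default_factory=list)
    cursor: int = 0

    def start(self, user_initiated: bool) -> None:
        # Safeguard 1: nothing runs without an explicit user command.
        if not user_initiated:
            raise PermissionError("automation requires an explicit user command")
        if self.state is not State.IDLE:
            raise RuntimeError("automation already started")
        self.state = State.RUNNING
        self._advance()

    def cancel(self) -> None:
        # Safeguard 2: the user can stop the run midstream.
        if self.state in (State.RUNNING, State.AWAITING_CONFIRMATION):
            self.state = State.CANCELLED
            self.notify("Stopped by user")

    def confirm(self) -> None:
        # Safeguard 3: the final action happens only after manual sign-off.
        if self.state is not State.AWAITING_CONFIRMATION:
            raise RuntimeError("nothing is waiting for confirmation")
        self._execute(self.steps[self.cursor])
        self.cursor += 1
        self.state = State.RUNNING
        self._advance()

    def _execute(self, step: Step) -> None:
        self.executed.append(step.description)
        self.notify(f"Done: {step.description}")

    def _advance(self) -> None:
        while self.state is State.RUNNING and self.cursor < len(self.steps):
            step = self.steps[self.cursor]
            if step.is_final:
                # Halt before the final step and hand control back to the user.
                self.state = State.AWAITING_CONFIRMATION
                self.notify(f"Waiting for your confirmation: {step.description}")
                return
            self._execute(step)
            self.cursor += 1
        if self.state is State.RUNNING:
            self.state = State.COMPLETED


if __name__ == "__main__":
    run = GatedAutomation([
        Step("open food delivery app"),
        Step("add items to cart"),
        Step("place order", is_final=True),
    ])
    run.start(user_initiated=True)  # pauses before "place order"
    run.confirm()                   # the user signs off on the final tap
```

In this model the final step can never execute from `start` alone; it runs only through `confirm`, which mirrors the manual confirmation requirement the announcement describes.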

Market positioning and competitive context

Google’s foray into constrained cross-app automation follows a broader industry trend toward agent-style AI assistants that handle multi-step workflows. OpenAI, Microsoft, and Anthropic have all showcased prototypes and limited releases of agents capable of summarizing emails, orchestrating calendar events, or placing online orders—but typically via cloud-hosted APIs. By contrast, Gemini’s automation beta executes on the device itself, underscoring Google’s emphasis on on-device AI and privacy controls.

Against the backdrop of rising regulatory scrutiny over AI-enabled consumer services, Google’s choice to contain the feature to flagship hardware and specific regions appears strategic. Analysts infer that the restricted rollout allows Google to gather controlled telemetry on error rates, user interventions, and any emergent security incidents before wider expansion. Meanwhile, open-source and third-party automation frameworks on Android will continue to cater to power users who demand full scripting freedom—an audience that Google’s beta does not aim to serve at this stage.

Within Google’s own ecosystem, the new Gemini automation overlaps with and potentially competes against legacy functionality in Google Assistant, which already manages smart-home controls, timers, and basic device settings. This dual-assistant scenario may reflect a phased transition: Gemini’s agentic workflows could gradually absorb more of Assistant’s duties as the sandboxed model proves reliable. Yet in the near term, organizations and end users will navigate a split environment in which some tasks are handled by the conventional Assistant, while others—especially those with external app dependencies—are delegated to the Gemini agent.

Human and organizational implications

At stake in Google’s automation push are questions of user agency, corporate control, and the evolving boundaries of human-AI collaboration. On one hand, delegating mundane transactional steps to an AI could liberate users from repetitive interactions, theoretically freeing cognitive bandwidth for higher-order decisions. On the other hand, embedding AI-driven tasks within a sealed container controlled by Google raises questions about who ultimately holds decision-making power when something goes wrong—a misdirected order, a privacy breach, or a fraudulent transaction.

Enterprise stakeholders will also weigh the potential benefits of faster customer experiences against the complexity of integrating a rapidly evolving AI stack. Organizations that rely on stable, audited processes may perceive the automated agent as an unpredictable variable unless Google surfaces robust logging and diagnostic tools. At the same time, app developers and integration partners face pressure to maintain consistent UI designs; even minor layout changes could disrupt the assistant’s selector logic and introduce new failure modes.
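To make the layout-fragility concern concrete, the sketch below shows one way a QA team might flag UI drift before it causes task failures. It assumes, purely for illustration, that each automation step depends on a known set of element identifiers and that the identifiers visible on a screen can be captured as a set. The function name and all identifiers are hypothetical, and Google has not described how Gemini locates UI elements.

```python
from typing import Dict, List, Set


def missing_selectors(
    expected: Dict[str, List[str]], screen_ids: Set[str]
) -> Dict[str, List[str]]:
    """Return, for each step, the expected element IDs absent from the screen.

    Steps whose elements are all present are omitted from the report.
    """
    report: Dict[str, List[str]] = {}
    for step, ids in expected.items():
        absent = [element_id for element_id in ids if element_id not in screen_ids]
        if absent:
            report[step] = absent
    return report


if __name__ == "__main__":
    # Hypothetical expectations recorded against an earlier app version.
    expected = {
        "search": ["search_box"],
        "checkout": ["cart_button", "pay_button"],
    }
    # Hypothetical snapshot after a redesign renamed the pay button.
    current_screen = {"search_box", "cart_button", "checkout_cta"}
    print(missing_selectors(expected, current_screen))
```

A non-empty report for a step is a signal to re-verify that step by hand before relying on the automation again.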

Finally, the limited regional and device scope highlights fragmentation challenges for global businesses. Enterprises with distributed workforces or customers in markets beyond the U.S. and Korea cannot use the automation beta for now, which may slow experimentation and consolidation around AI-driven mobile workflows. Observers expect Google to expand device support gradually, but each new handset family or OS variant introduces fresh integration challenges that may prolong the beta phase.

Implications for operators

- Organizations standardizing on Pixel 10/10 Pro or Galaxy S26 hardware in the U.S. and Korea will likely serve as initial test beds for cross-app automations, generating early data on assisted task completion rates and user intervention frequency.

- Security and compliance teams will want to assess Google’s isolation and logging guarantees as they determine whether sandboxed automations meet internal audit requirements for sensitive transactions.

- Product and QA groups may track partner app UI changes closely, since any deviation from expected interface elements could increase task failures or trigger fallback behaviors that expose edge-case risks.

- Platform strategists could view this beta as an incremental step toward a broader universal assistant vision, prompting comparative analyses of on-device versus cloud-hosted agent frameworks and influencing vendor evaluation roadmaps.

Signals to monitor

Key indicators of the feature’s trajectory include the pace at which Google expands device and regional availability, the number and diversity of third-party app integrations, and real-world telemetry on automation success versus error counts. Equally important will be any public disclosures of security audits or incident reports that test Google’s sandboxing claims. Finally, user and developer community feedback—particularly around cases of unintended automations or data exposures—will shape whether Gemini’s controlled orchestration model becomes a template for future AI assistants or remains a cautious experiment confined to flagship hardware.
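For teams that plan to track these signals themselves, the arithmetic behind completion, intervention, and failure rates is simple. The sketch below assumes each automation run is logged with one of three outcome labels; the labels and the `summarize` function are hypothetical and do not correspond to any telemetry format Google has published.

```python
from collections import Counter
from typing import Dict, Iterable

# Hypothetical outcome labels for a single automation run.
OUTCOMES = ("completed", "user_stopped", "failed")


def summarize(outcomes: Iterable[str]) -> Dict[str, float]:
    """Compute per-outcome rates over a collection of logged runs."""
    counts = Counter(outcomes)
    unknown = set(counts) - set(OUTCOMES)
    if unknown:
        raise ValueError(f"unknown outcome labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        # No runs logged yet: report zero rates rather than dividing by zero.
        return {"runs": 0, "completion_rate": 0.0,
                "intervention_rate": 0.0, "failure_rate": 0.0}
    return {
        "runs": total,
        "completion_rate": counts["completed"] / total,
        "intervention_rate": counts["user_stopped"] / total,
        "failure_rate": counts["failed"] / total,
    }


if __name__ == "__main__":
    sample = ["completed"] * 6 + ["user_stopped"] * 3 + ["failed"]
    print(summarize(sample))
```

Tracking the intervention rate separately from the failure rate matters here, because a run the user stopped is a different signal from a run the assistant could not finish.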