Executive summary – what changed and why it matters

Indus embodies India’s pursuit of sovereign AI capabilities but reflects developmental immaturity compared with global incumbents.

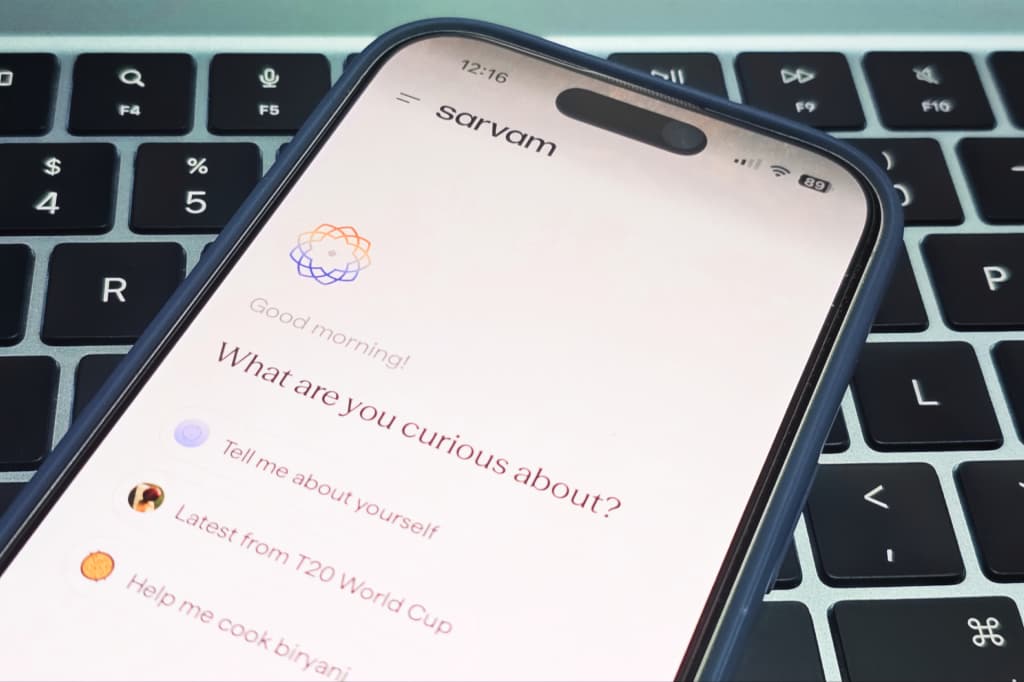

On February 20, 2026, Indian AI startup Sarvam released Indus—a web and mobile chat application powered by its newly unveiled Sarvam 105B model—in limited beta. By coupling a 105-billion-parameter LLM with multilingual text and voice interfaces, Indus positions itself as a domestically controlled alternative to global offerings in a market where generative AI engagement is surging.

- Platform scope: available in India-only beta on iOS, Android, and web; supports text and voice input/output, file uploads, document editing, and mid-chat language switching across major Indian languages.

- Model variants: Sarvam disclosed both a 105B flagship and a 30B variant; compute capacity is being scaled gradually, leading to potential access queues.

- Known beta limitations: absence of granular chat deletion controls, a non-toggleable reasoning mode that can introduce latency, and phased user onboarding tied to infrastructure expansion.

Key takeaways for executives

- Structural insight: Indus offers a domestic proof-point for AI sovereignty built around data locality and Indic language coverage, but it is operationally immature relative to frontier-scale incumbents.

- Stakeholder trade-offs: early adopters will encounter capacity constraints, basic user controls, and unverified performance benchmarks, requiring attention to service-level expectations and compliance evidence.

- Market timing: the launch coincides with accelerated generative AI adoption in India and intensifying scrutiny over data governance; local players have a window to establish footholds but face robust competition from global platforms.

- Investment and scale: Sarvam is backed by $41 million in funding and by partnerships under the IndiaAI Mission. That backing signals political support for sovereign AI but does not eliminate execution risks around compute scaling and enterprise readiness.

Breaking down the announcement

Indus delivers a chat interface underpinned by the 105-billion-parameter Sarvam 105B model, now accessible in limited beta across mobile and web. Key functions include multilingual conversation with mid-chat switching between English and major Indian languages, speech-to-text input and text-to-speech output, and basic file handling for PDFs and images. Experimental AI agents within the app are intended to assist with tasks such as document summarization or Q&A, though their capabilities have yet to be benchmarked.

Authentication relies on phone numbers or third-party identity providers (Google, Apple), reflecting a balance between usability and integration simplicity. Sarvam’s co-founders have highlighted a phased rollout strategy linked to compute availability, which may yield waitlists or throttled access as the company calibrates its infrastructure.

Enterprise and hardware partnerships announced at the India AI Impact Summit—most notably with HMD for feature-phone integration and Bosch for automotive applications—underscore Sarvam’s ambition to extend Indus beyond consumer chat. Yet these collaborations are at an exploratory stage, with integration pilots and performance evaluations pending public disclosure.

Why this matters now

India’s digital ecosystem is at a crossroads where generative AI adoption intersects with national priorities of data sovereignty, linguistic representation, and regulatory oversight. Global AI platforms have already attracted millions of Indian users, creating both dependency and calls for localized alternatives that align with domestic policy frameworks on data residency and content governance.

Indus addresses these concerns by positioning itself as a sovereign solution: enterprises gain a vendor whose full-stack control spans model training, data storage, and interface delivery within Indian jurisdiction. For end users, robust Indic language support promises inclusion in digital services and affirms linguistic identity. However, these socio-political advantages coexist with open questions about technical readiness, ecosystem support, and long-term sustainability.

Competitive context and caveats

Sarvam’s 105B-parameter model is modest compared with frontier LLMs from OpenAI, Google, or Anthropic, which are widely reported to scale into the hundreds of billions or trillions of parameters, although those vendors do not publish exact counts. While a smaller model footprint can yield cost and latency benefits where infrastructure is constrained, it may also correspond to lower performance on complex reasoning, nuanced instruction following, or multimodal integration. Those projections remain speculative without head-to-head benchmarks.

Absent publicly released evaluations, stakeholders will look for standardized metrics—such as reasoning accuracy on MMLU-style tests, latency comparisons in Indic languages, or throughput under concurrent load—to triangulate Indus’s capabilities. Early assertions of “efficiency focus over frontier-scale accuracy” require empirical substantiation, especially as enterprises weigh trade-offs between speed, cost, and depth of understanding.
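As an illustration of how an evaluation team might summarize two of the metrics named above (latency by language and throughput under load), the following is a minimal Python sketch. It assumes latency samples have already been collected by some external load test; the function names, the `LoadSummary` type, and the sample figures are hypothetical and do not reflect any Sarvam API or published result.

```python
import math
from dataclasses import dataclass


@dataclass
class LoadSummary:
    requests: int          # number of completed requests
    p50_ms: float          # median latency (nearest-rank)
    p95_ms: float          # 95th-percentile latency (nearest-rank)
    throughput_rps: float  # completed requests per second of wall-clock time


def percentile(sorted_values, pct):
    """Nearest-rank percentile of an already sorted, non-empty list."""
    if not sorted_values:
        raise ValueError("no samples")
    rank = max(1, math.ceil(pct / 100 * len(sorted_values)))
    return sorted_values[rank - 1]


def summarize(latencies_ms, wall_clock_s):
    """Summarize one test run: latency percentiles and overall throughput."""
    if wall_clock_s <= 0:
        raise ValueError("wall_clock_s must be positive")
    ordered = sorted(latencies_ms)
    return LoadSummary(
        requests=len(ordered),
        p50_ms=percentile(ordered, 50),
        p95_ms=percentile(ordered, 95),
        throughput_rps=len(ordered) / wall_clock_s,
    )


def summarize_by_language(samples, wall_clock_s):
    """samples maps a language label to its list of latencies in ms."""
    return {lang: summarize(vals, wall_clock_s) for lang, vals in samples.items()}


if __name__ == "__main__":
    # Hypothetical measurements, for illustration only.
    runs = {
        "hi": [120, 80, 200, 150, 100, 90, 110, 300, 95, 105],
        "ta": [140, 160, 130, 400, 150],
    }
    for lang, summary in summarize_by_language(runs, wall_clock_s=5.0).items():
        print(lang, summary)
```

Reporting a tail percentile alongside the median matters here because capacity-constrained services tend to degrade at the tail first, which is where waitlists and throttling would show up for users.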

Moreover, features taken for granted in mature global chat apps—granular conversation management, fine-tuning controls for domain-specific use cases, and advanced safety filters—are either missing or in early stages in Indus. These gaps suggest a phased evolution, where foundational sovereignty is prioritized over feature breadth in the beta phase.

Risks and governance considerations

Capacity constraints pose tangible risks to service-level expectations. If user demand outstrips compute expansion, latency spikes and access waitlists could erode enterprise confidence. The non-toggleable reasoning mode, baked into the default user experience to enhance accuracy, has been flagged by Sarvam as a potential source of slower response times—an operational trade-off that may influence adoption in time-sensitive workflows.

User control limitations, such as the inability to delete individual chat threads, create data governance friction. In privacy-sensitive sectors (financial services, healthcare, regulated manufacturing), granular data deletion and retention policies are often a compliance prerequisite. While an India-only rollout mitigates certain cross-border concerns, Sarvam has not yet disclosed its certification status or third-party audit arrangements.

Safety and moderation tooling also figures prominently in the governance calculus. Established platforms offer audit logs, content review pipelines, and enterprise-grade contracts; Sarvam has yet to describe Indus’s safety infrastructure publicly, leaving open questions about its ability to detect hallucinations, flag harmful content, or enforce corporate policies at scale.

What to watch next

- Release of public benchmarking reports comparing Sarvam 105B to ChatGPT and Gemini across reasoning, language comprehension, and multimodal tasks—especially in major Indian languages.

- Announcements on compute infrastructure scaling, developer APIs, and detailed feature roadmaps that clarify timelines for enterprise-oriented controls such as custom fine-tuning and multi-tenant governance.

- Results from pilot integrations with HMD and Bosch, including performance data on low-resource hardware and automotive environments where real-time inference and offline operation may be critical.

- Emerging community feedback in developer forums, user groups, and industry conferences regarding multilingual fidelity, latency patterns, and overall usability of the beta experience.

Implications for stakeholders

Enterprises evaluating AI sovereignty

Enterprises prioritizing data locality and regulatory compliance will view Indus as a structural experiment in domestic AI sovereignty. The full-stack control over data pipelines offers a value proposition aligned with corporate governance mandates, though practical adoption will hinge on concrete SLA definitions, deletion policies, and demonstrable performance benchmarks across target tasks.

Product leaders in local markets

Product teams focusing on customer engagement and regional relevance may find Indus’s Indic language support compelling. Its mid-chat switching and voice modalities speak to inclusive design principles. Yet product roadmaps will need to account for feature differentials—such as absent conversation management controls and limited plugin ecosystems—that could delay deeper integration into sophisticated user journeys.

Investors and ecosystem partners

Investors tracking India’s AI landscape will interpret Sarvam’s funding and IndiaAI Mission endorsement as signals of political momentum behind sovereign AI. Partnership pilots in low-end hardware segments and automotive contexts suggest diversified go-to-market strategies. The timing of additional funding rounds and compute expansion milestones will serve as barometers for scaling feasibility and capital efficiency.

Regulators and compliance bodies

Regulatory agencies monitoring generative AI deployment will note Indus’s claim to jurisdictional alignment and data residency. However, the current beta’s limited user controls and unverified audit tooling suggest that formal compliance certifications, such as ISO 27001 or sector-specific standards, are not yet in place. Regulators may seek transparency reports, third-party audits, and disclosures of retention and deletion policies to evaluate risk frameworks.

Civil society and user communities

Civic actors concerned with digital rights and language inclusion will assess Indus through the lens of linguistic equity and user agency. The emphasis on Indian-language capabilities has the potential to democratize access, yet the absence of community feedback channels and open-source components limits broader scrutiny. Civil society groups may call for participatory evaluations of bias mitigation, accessibility features, and content governance models.

Indus represents a milestone in India’s journey toward sovereign AI infrastructure, foregrounding data control and linguistic diversity while revealing the typical limitations of an early-stage offering. Its evolving feature set, performance profile, and governance posture will shape stakeholder assessments of whether a domestically governed LLM can rival or complement global incumbents in high-stakes enterprise and public sector contexts.