On February 25, 2026, Adobe introduced Quick Cut in its Firefly Video Editor (beta), a step toward AI-driven timeline assembly and an example of how automation is beginning to reshape editorial workflows.

Breaking down the capability

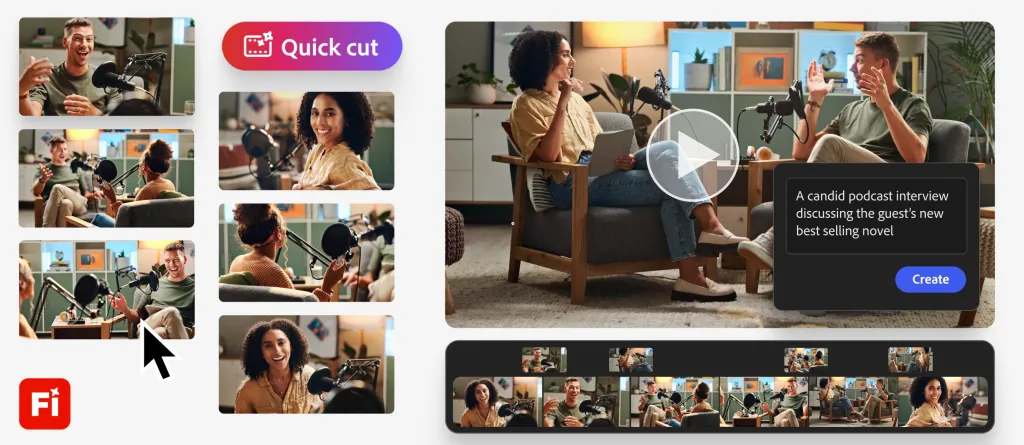

Quick Cut is intended to shift editors’ time from selection to storytelling: it automatically assembles a first-draft video timeline from uploaded clips, B-roll, images, and audio, guided by natural-language prompts.

Under the hood, Quick Cut combines scene detection, audio analysis, and “smart shot selection” to identify usable takes and remove pauses, false starts, or silent gaps. Users set pacing and aspect ratio, and can supply shot lists or script excerpts for greater editorial precision. Optional B-roll channels can be designated up front, and the resulting draft appears directly within Firefly’s editing canvas. Subsequent refinements—color grading, fine transitions, and transcript-driven caption edits—remain in the human domain.
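
Adobe has not published how Quick Cut detects pauses or silent gaps, so the following is only a minimal sketch of the general idea, assuming a precomputed list of per-frame loudness values. The function name `find_keep_segments` and the `threshold` and `min_gap_sec` parameters are hypothetical and chosen for illustration; they are not part of any Adobe API.

```python
from typing import List, Tuple


def find_keep_segments(
    levels: List[float],
    frame_sec: float,
    threshold: float = 0.05,   # assumed loudness floor; below this a frame counts as silent
    min_gap_sec: float = 0.5,  # assumed minimum silence length worth cutting
) -> List[Tuple[float, float]]:
    """Return (start, end) times in seconds of spans to keep.

    Silent runs shorter than min_gap_sec are kept so natural breaths survive;
    longer silent runs are dropped.
    """
    min_gap_frames = max(1, round(min_gap_sec / frame_sec))
    keep = [True] * len(levels)

    # Mark every sufficiently long run of silent frames for removal.
    i = 0
    while i < len(levels):
        if levels[i] < threshold:
            j = i
            while j < len(levels) and levels[j] < threshold:
                j += 1
            if j - i >= min_gap_frames:
                for k in range(i, j):
                    keep[k] = False
            i = j
        else:
            i += 1

    # Collapse the per-frame flags into contiguous (start, end) segments.
    segments: List[Tuple[float, float]] = []
    start = None
    for idx, flag in enumerate(keep):
        if flag and start is None:
            start = idx
        elif not flag and start is not None:
            segments.append((start * frame_sec, idx * frame_sec))
            start = None
    if start is not None:
        segments.append((start * frame_sec, len(levels) * frame_sec))
    return segments


# Illustrative input: eight 0.25-second frames with one long and one short quiet stretch.
demo_levels = [0.3, 0.4, 0.0, 0.0, 0.0, 0.5, 0.01, 0.6]
demo_segments = find_keep_segments(demo_levels, frame_sec=0.25)
```

In this toy input the three-frame silence is cut while the single quiet frame is left in place. Where that threshold sits is exactly the kind of pacing decision a human editor would still want to review.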

Market context and positioning

This launch extends Adobe’s December 2025 updates to Firefly Video Editor, which added layered timelines and camera-motion prompts, and scales the product from clip-level commands to full-project assembly. Competing platforms, including Runway, Descript, and emerging AI tools, have showcased similar auto-editing ambitions, yet embedding Quick Cut in Firefly and the broader Creative Cloud ecosystem could streamline license management, asset sharing, and collaboration for existing subscribers. That advantage remains unverified, however, because no efficiency or cost benchmarks are publicly available.

Adobe frames Quick Cut as optimized for fast-turnaround formats—product reviews, interviews, podcasts, and event recaps—where narrative-first assembly can accelerate time to draft. Nonetheless, the tool’s reliance on clear audio tracks, well-labeled footage, and descriptive prompts suggests that workflows lacking structured inputs may see diminishing returns.

Quantified and practical limits

No independent metrics have been published to confirm time savings, accuracy rates, or cost per finished minute. Early press coverage cites Adobe’s own claims, but without third-party validation these remain provisional. Anecdotally, clean transcripts and plentiful B-roll lead to smoother auto-assembly; by contrast, complex multi-camera shoots, layered soundscapes, or nuanced narrative arcs frequently require manual intervention to maintain editorial intent.

As a beta offering, Quick Cut enforces generation limits—currently tied to a promotional 2K-render allowance for Pro/Premium sign-ups through mid-March—which may obscure the true compute and licensing costs of broad deployment. Teams evaluating the tool should be prepared for variability in draft quality and unanticipated processing time on high-resolution projects.

Risks and governance considerations

- Accuracy risk: In multi-speaker interviews, the AI might select the wrong speaker turns or reorder responses, creating misleading sequences that distort the intended context.

- Copyright and provenance: Automatically generated transitions or AI-sourced cut-bridges could introduce elements without clear licensing, necessitating metadata audits during post-production.

- Privacy and consent: Automated assembly may surface unscreened subjects or sensitive dialogue; without human review gates, organizations face potential compliance or reputational exposure.

- Cost variability: The beta’s promotional 2K limit and undisclosed post-beta pricing imply unpredictable compute and licensing expenses, which could affect budgeting for sustained use.

Organizational impacts

- Teams are likely to pilot Quick Cut on a small slate of projects—measuring time-to-first-draft and post-generation edit effort—to assess real savings and editorial quality.

- Where accuracy risk is high (for example, legal, journalistic, or compliance content), mandatory human checkpoints are likely to emerge as standard governance controls.

- Adoption among Creative Cloud subscribers with structured workflows may outpace that of teams handling unstructured or cinematic productions, due to integration convenience and defined input pipelines.

- Should performance metrics be validated, editorial hiring and skill development may tilt toward narrative strategy and creative finishing rather than manual selects and cuts.
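
The pilot measurement described in the first point above could be tallied along the following lines. This is a sketch under stated assumptions rather than a recommended methodology: the `PilotProject` fields, the manual baseline figure, and the sample numbers are all hypothetical, and a real pilot would also need some way to score editorial quality.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class PilotProject:
    name: str
    baseline_draft_min: float  # assumed: manual time to reach a comparable first draft
    auto_draft_min: float      # time to first draft using auto-assembly
    post_edit_min: float       # manual edit effort after the generated draft


def net_saving_min(p: PilotProject) -> float:
    """Minutes saved once post-generation cleanup is counted against the auto draft."""
    return p.baseline_draft_min - (p.auto_draft_min + p.post_edit_min)


def summarize(projects: List[PilotProject]) -> Dict[str, object]:
    """Average net saving plus the projects where automation cost more time than it saved."""
    savings = [net_saving_min(p) for p in projects]
    return {
        "mean_net_saving_min": mean(savings),
        "projects_with_net_loss": [p.name for p in projects if net_saving_min(p) < 0],
    }


# Made-up figures for illustration only; they are not measurements of Quick Cut.
pilot = [
    PilotProject("podcast-recap", baseline_draft_min=120, auto_draft_min=10, post_edit_min=50),
    PilotProject("multicam-event", baseline_draft_min=90, auto_draft_min=15, post_edit_min=95),
]
summary = summarize(pilot)
```

Tracking post-generation edit effort separately matters because a fast first draft that needs heavy rework, as in the second made-up project, can erase the apparent saving.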

What to watch next

Independent performance benchmarks, peer-reviewed case studies, and practitioner feedback in user forums will be critical to substantiating Quick Cut’s claimed efficiencies. Competitor responses from Runway, Descript, and other AI-edit platforms may prompt feature convergence or deeper enterprise governance offerings. Upcoming beta releases—particularly those addressing accuracy improvements, pricing transparency, and export-level audit logs—will determine whether editorial teams can credibly reallocate their efforts from manual selection tasks to higher-order storytelling and fine post-production.