Executive summary – what changed and why it matters

MIT Technology Review reported on March 4, 2026 that Anthropic’s large language model, Claude, is allegedly being used to assist U.S. strike planning by identifying and prioritizing targets related to Iran. If accurate, this marks a substantive shift: a commercial, general-purpose AI tool moving beyond analytical support into operational decision influence. The shift exposes gaps in governance, traceability, and adherence to international legal norms, and the reported pace of adoption is driven by recurring Shahed drone incidents.

Key takeaways

- Substantive shift: A commercial LLM is reported to play a role in shaping kinetic target lists, rather than remaining in low-stakes analysis.

- High uncertainty: Neither Anthropic nor the Pentagon has publicly confirmed the report, and the model is described as “helping identify and prioritize” targets rather than executing autonomous strikes.

- Contextual pressure: Surging Shahed drone activity and urgent timelines are reported to incentivize rapid adoption of vendor AI over bespoke defense systems.

- Main risk areas: potential for model hallucinations, data classification leakage, lack of audit trails, and undefined human-in-the-loop controls.

- Broader trend: OpenAI’s pursuit of NATO contracts and other vendor initiatives suggest a vendor-agnostic turn toward commercial generative AI in defense.

Breaking down the report – capabilities, limits, and unknowns

According to MIT Technology Review, Claude is alleged to “identify” and “prioritize” targets. This phrasing implies an analytic-assist function, such as ranking potential launch sites or flagging points of interest, rather than issuing engagement directives. Three technical dimensions remain publicly unverified:

- Input data classification: It is unknown whether Claude is fed classified U.S. intelligence or limited to open-source information.

- Compute environment: Reports do not confirm whether the model runs in an isolated, air-gapped setting or on a multi-tenant cloud instance.

- Human oversight mechanisms: The nature and rigor of human-in-the-loop controls that review and approve model outputs are not documented.

While LLMs can synthesize text, imagery metadata, and signals reports at scale, they are known to hallucinate: inventing details, misattributing sources, or omitting uncertainty estimates. In a military targeting context, these weaknesses could translate into misprioritized or erroneous target recommendations. Moreover, models trained on broad data corpora may inadvertently surface sensitive patterns or disclose input provenance under adversarial prompting.

Why this is happening now

Two converging dynamics appear to be driving the reported deployment. First, Iran’s frequent use of Shahed drones generates a high-volume targeting challenge: assessing numerous launch points across shifting frontlines. Second, procurement timelines for specialized defense AI often span years, while commercial LLMs are readily deployable via existing vendor channels. Industry observers note that OpenAI’s bids for NATO contracts and other vendor initiatives signal an emerging preference for commercial generative AI in military contexts.

Risks: legal, technical, and governance

International humanitarian law (IHL) mandates distinction and proportionality in targeting, principles that hinge on reliable, traceable evidence. LLM outputs lack intrinsic provenance metadata and cannot be certified in the way geospatial sensor outputs can. Questions arise over recordkeeping: which entity logs model queries, and how is the chain of command documented when AI influences recommendations?

Technical risk scenarios include:

- Hallucination-induced errors that misidentify civilian structures as military assets.

- Dataset contamination or unintended memorization of classified inputs, raising data-leakage concerns.

- Cloud retention policies that could leave classified intelligence footprints on vendor servers.

- Immature audit and forensics capabilities, compared with established military-grade systems.

On governance, several gaps stand out. No public framework details how vendor models meet defense-grade security certifications. It is unclear whether existing arms-control and export-control regimes account for the cross-border deployment of commercial LLMs in targeting workflows.

Comparison to specialized alternatives

Specialized defense analytics commonly employ sensor fusion, Bayesian inference, and rule-based verification to produce probabilistic assessments with explicit provenance. These systems offer deterministic audit trails, enabling investigators to trace each data point through the targeting process. In contrast, commercial generative AI tools promise agility and rapid iteration, but they sacrifice the deterministic traceability that underpins legal and operational confidence in high-stakes decisions.

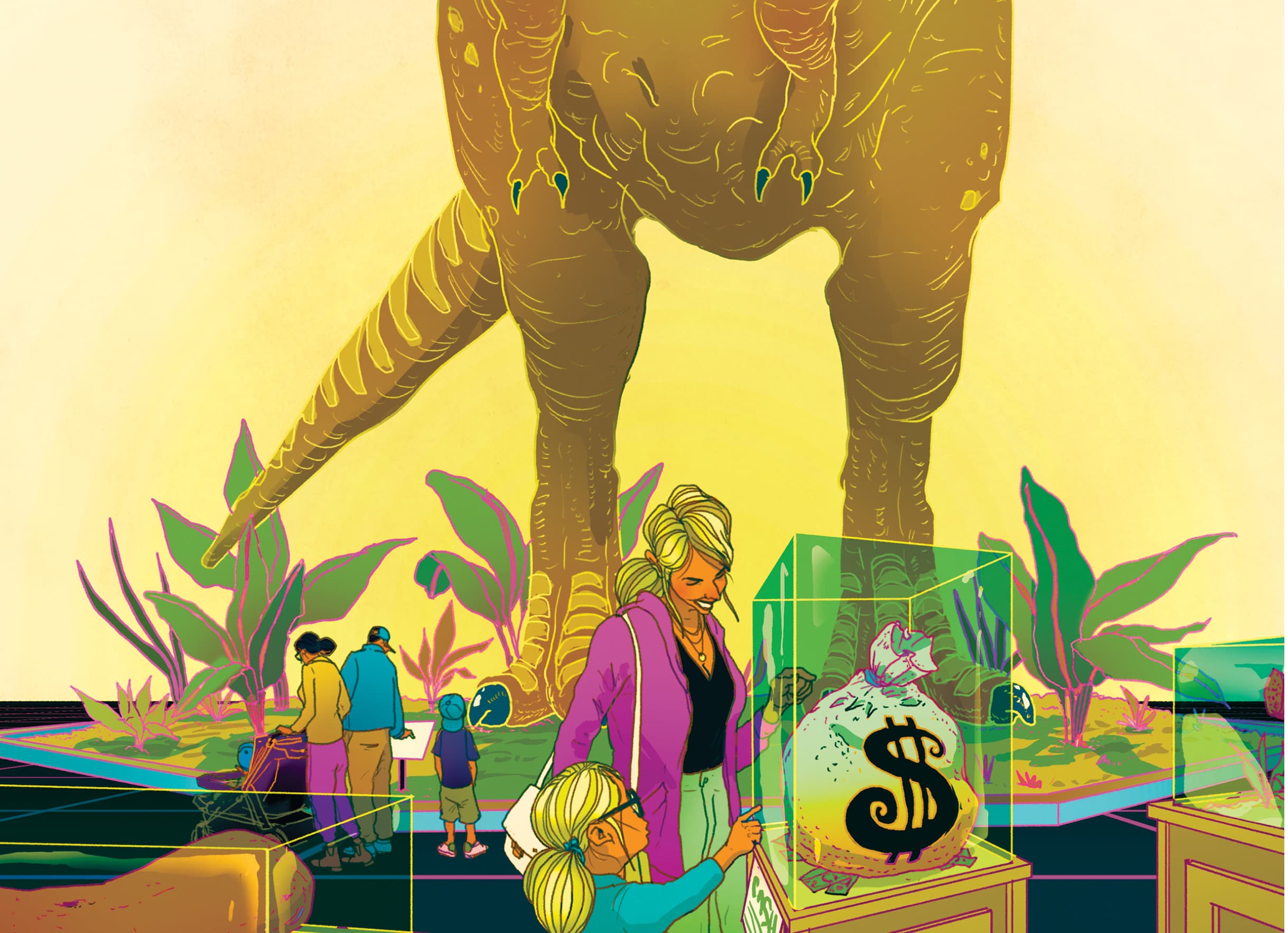

The vendor ecosystem is diversifying: Anthropic’s Claude, OpenAI’s models, and other entrants are all courting defense customers. This emerging landscape suggests that reliance on off-the-shelf generative AI is maturing from isolated experiments into institutional procurement strategies.

Governance gaps and policy options

The reported integration of commercial LLMs into strike planning points to several policy options, none of them a definitive fix. Stakeholders could consider:

- Establishing independent oversight bodies to review AI-assisted targeting protocols and verify compliance with IHL.

- Requiring model provenance frameworks that catalog data sources, compute environments, and query logs before any downstream operational use.

- Defining human-in-the-loop checkpoints with formal documentation of decisions, including rationale for accepting or rejecting AI-generated priorities.

- Mandating independent red-team or blue-team exercises to simulate high-risk scenarios—such as adversarial prompts that might trigger hallucinations or data leakage.

- Exploring regulatory frameworks that clarify how export controls and classification rules apply to commercial AI models used in defense supply chains.
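
To make the query-log and documented-checkpoint options above more concrete, the following is a minimal sketch of a tamper-evident record: each entry is hashed together with the hash of the entry before it, so a later edit to any logged query or review decision is detectable. It is an illustration under stated assumptions, not a description of any system named in the report. The `AuditLog` class, the entry kinds (`model_query`, `human_review`), and every field name are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any

# Placeholder "previous hash" for the first entry in the chain.
GENESIS = "0" * 64


def _digest(prev_hash: str, payload: dict[str, Any]) -> str:
    """Hash the previous entry's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log; all field names are illustrative."""

    entries: list[dict[str, Any]] = field(default_factory=list)

    def append(self, kind: str, actor: str, detail: dict[str, Any]) -> str:
        """Add an entry linked to the previous one and return its hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        payload = {
            "seq": len(self.entries),
            "kind": kind,
            "actor": actor,
            "detail": detail,
        }
        entry_hash = _digest(prev_hash, payload)
        self.entries.append(
            {"payload": payload, "prev": prev_hash, "hash": entry_hash}
        )
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; False if any entry was altered or reordered."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if _digest(prev_hash, entry["payload"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    # A logged model query: data sources and compute environment are recorded.
    log.append(
        "model_query",
        "analyst-01",
        {"model": "example-model", "environment": "isolated", "sources": ["open-source"]},
    )
    # A human checkpoint: the decision and its rationale are recorded.
    log.append(
        "human_review",
        "reviewer-02",
        {"query_seq": 0, "decision": "rejected", "rationale": "insufficient corroboration"},
    )
    print(log.verify())  # True: chain is intact

    # Silently changing a recorded decision breaks the chain.
    log.entries[1]["payload"]["detail"]["decision"] = "accepted"
    print(log.verify())  # False: tampering detected
```

A hash chain of this kind only shows that a record was altered after the fact. It does not establish who controls the log or whether the logged rationale was adequate, which is where the independent oversight option applies.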

These policy options highlight the governance void rather than prescribing specific operational steps. They underscore the urgent need for legal, acquisition, and oversight structures tailored to generative AI’s integration into military decision processes.

Conclusion

The reported use of a commercial LLM to assist U.S. strike planning represents more than a technological novelty—it signals a potential governance crisis in military AI procurement. As generative models transition from advisory roles into tactical influence, questions of auditability, accountability, and legal compliance become paramount. Whether this practice becomes institutionalized will depend on policymakers’ ability to close these gaps and establish transparent frameworks for the responsible deployment of commercial AI in warfare contexts.