Steerling-8B Makes Interpretability an Architectural Claim, But Validation Hangs on Annotation Quality

Guide Labs’ open-sourced Steerling-8B positions interpretability as a built-in feature rather than a post-hoc add-on by embedding a “concept layer” that traces every generated token to labeled training data. This architectural shift promises auditability and compliance controls, yet its value depends on the quality of human and AI-generated annotations and on external verification. No independent benchmarks or model card have been published, leaving the company-reported figure of ~90% of frontier-model capability unverified.

- No independent benchmarks: Company-reported ~90% frontier capability pending external validation.

- Provenance fragility: Chain-of-custody claims depend on consistent, bias-free annotation; gaps in the trail could leave undisclosed data sources hidden from regulators.

Breaking down the announcement

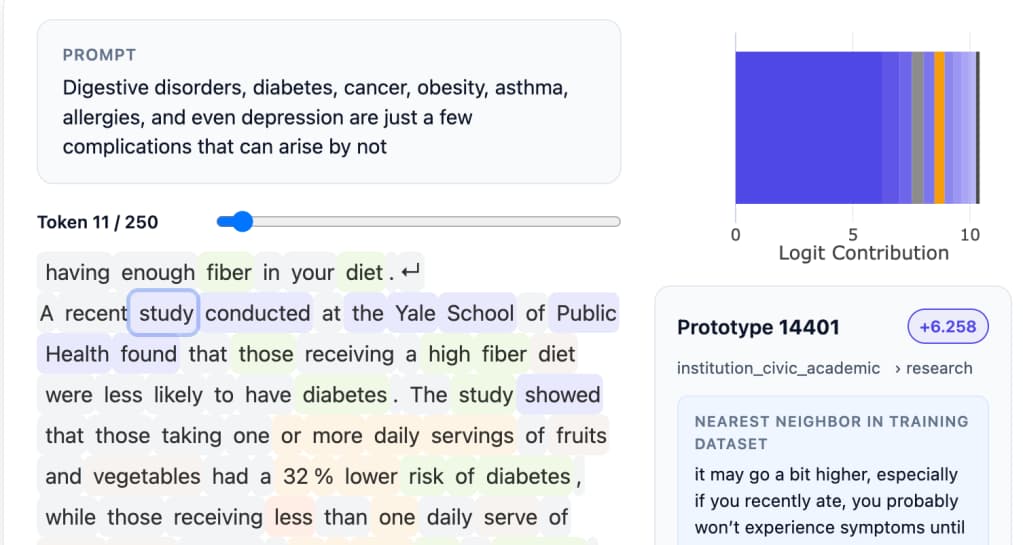

Steerling-8B’s core innovation is its concept layer, introduced during training. Instead of interpreting hidden weights after the fact (“neuroscience on a model”), Guide Labs pre-annotates training examples into conceptual categories and employs auxiliary AI models to scale those labels. At generation time, the model reports which concept buckets influenced each token, producing a token-by-token provenance trail.
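
To make the idea of a token-by-token provenance trail concrete, here is a minimal sketch of how such a trail could be represented and summarized. It is not Guide Labs' format or API, which the announcement does not describe: the `TokenProvenance` record, the `top_concepts` helper, the concept labels, and the weights are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenProvenance:
    """Hypothetical record: one generated token and its concept attributions."""
    token: str
    concept_weights: dict  # concept label -> attribution weight (assumed 0..1)


def top_concepts(trail, k=2):
    """Sum attribution weights per concept across a trail; return the k largest."""
    totals = defaultdict(float)
    for record in trail:
        for concept, weight in record.concept_weights.items():
            totals[concept] += weight
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)[:k]


# Illustrative data only; not output from Steerling-8B.
trail = [
    TokenProvenance("loan", {"finance": 0.75, "legal": 0.25}),
    TokenProvenance("denied", {"finance": 0.5, "legal": 0.5}),
]
print(top_concepts(trail))
```

An auditor reviewing a response would likely care less about any single token than about this kind of aggregate view, which shows the concept buckets that dominated the output as a whole.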

Numbers and claims to note

- Model architecture: 8 billion parameters with an integrated concept layer; open-sourced Feb 23, 2026.

- Performance claim: Company-reported ~90% of frontier capability using less data (pending independent benchmarks and model card).

- Funding & origin: Emerged from Y Combinator; $9 million seed led by Initialized Capital.

- Roadmap: Plans for larger models, commercial API/agent access, and regulated-sector applications.

Why this matters now

Organizations in finance, healthcare, and other regulated industries face mounting pressure for AI systems that can demonstrate source accountability, selective content blocking, and privacy safeguards. If interpretability can be engineered without large performance trade-offs, enterprises could shift from black-box models chosen for raw accuracy to architectures designed for governance and legal defensibility.

How it compares to alternatives

Existing interpretability approaches include post-hoc probes on large black-box models, retrieval-augmented generation with cited documents, and hybrid logging of prompts and responses. Steerling-8B departs by making provenance an architectural feature. That may reduce ambiguity compared to after-the-fact analysis, but it introduces new dependencies: annotation taxonomy design, the reliability of auxiliary annotators, and the risk that concept labels oversimplify emergent behaviors.

Risks and unanswered questions

- Validation gap: Absence of a published model card or independent benchmarks leaves performance and traceability claims unverified.

- Annotation cost and bias: Up-front labeling can codify blind spots; auxiliary models may replicate or amplify those biases.

- Provenance integrity: Trace links could be incomplete or manipulated, and regulators will seek end-to-end chain-of-custody assurances.

- Privacy and IP exposure: Detailed source mapping risks revealing private or copyrighted data unless redaction and licensing are rigorously managed.

Implications for operators

- Operators will likely pilot constrained deployments to assess concept-layer precision versus existing models.

- Teams may integrate annotation audits to monitor bias introduction before broad rollout.

- Governance leads will evaluate traceability workflows for compatibility with compliance frameworks.
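
One way an annotation audit could be run in practice is to compare human labels with auxiliary-model labels on a shared sample and measure chance-corrected agreement. The sketch below uses Cohen's kappa, a standard agreement statistic; it is an assumed audit method, not one Guide Labs has described, and the label names and sample data are hypothetical.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    # Fraction of items on which the two annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Illustrative labels only: human annotators vs. an auxiliary model.
human = ["finance", "finance", "health", "health"]
auxiliary = ["finance", "health", "health", "health"]
print(cohen_kappa(human, auxiliary))
```

A kappa near 1 indicates the auxiliary annotator tracks human judgment closely, while values near 0 mean agreement is no better than chance. The statistic measures consistency only: if human and auxiliary annotators share the same blind spot, they can agree strongly and still be wrong.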

Questions buyers will ask

- How will annotation guidelines and auxiliary-model architectures be documented in a public model card?

- What independent benchmarks will verify the ~90% frontier-capability claim?

- How does the concept taxonomy handle emerging or out-of-distribution concepts without manual relabeling?

- What mechanisms ensure chain-of-custody integrity when provenance trails are audited?
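
For the chain-of-custody question, one commonly used mechanism is a hash-chained log, in which each provenance entry commits to the hash of the one before it, so later edits become detectable. The sketch below illustrates that general technique with Python's standard `hashlib` and `json` modules; nothing in the announcement says Steerling-8B works this way, and the entry fields and function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder hash for the first entry


def _digest(prev_hash, payload):
    """SHA-256 over the previous hash plus a canonical JSON form of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(chain, payload):
    """Append a provenance entry linked to the current head of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, payload),
    })


def verify_chain(chain):
    """Return True only if every entry links to and matches its predecessor."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


# Illustrative entries only.
log = []
append_entry(log, {"token": "loan", "concept": "finance"})
append_entry(log, {"token": "denied", "concept": "legal"})
print(verify_chain(log))
```

A chain like this only shows that recorded entries were not altered afterward. It says nothing about whether the trail was complete or accurate when written, and it needs the head hash stored somewhere the log's owner cannot rewrite.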

What to watch next

- Publication of a model card and independent third-party benchmarks against 8B and 70B baselines.

- Technical paper detailing concept-layer design, annotation workflows, and bias mitigation strategies.

- Early enterprise pilot reports that highlight real-world traceability successes or failures.

- Community reviews and forks that stress-test the concept taxonomy under diverse data regimes.