Executive summary – Claude Code’s voice mode pushes hands-free coding but leaves enterprise audit and privacy gaps

Anthropic’s recent rollout of voice mode in Claude Code introduces spoken commands as a native input channel for code generation and modification. Although the feature is presented as a stride toward hands-free, conversational development, the only source of details is an engineer’s X post: there is no formal documentation, and data handling remains ambiguous. These omissions leave open questions about auditability, privacy safeguards, and transcription accuracy. This gap between marketed innovation and enterprise-grade governance defines the feature’s practical impact.

Key takeaways

- According to a March 3 announcement by an Anthropic engineer, the feature is available to a limited subset of users; no official blog post or changelog corroborates this.

- Transcription tokens are said to be exempt from quota limits and charges during the early phase, but Anthropic has not confirmed this in an official statement.

- Voice mode availability is noted across paid tiers, though plan-specific conditions remain unconfirmed.

- The functionality arrives shortly after OpenAI enabled voice input for Codex, intensifying competition in voice-driven coding interfaces.

- Unclear voice data retention and lack of explicit audit logs raise concerns for teams in regulated sectors.

Announcement details and documentation gaps

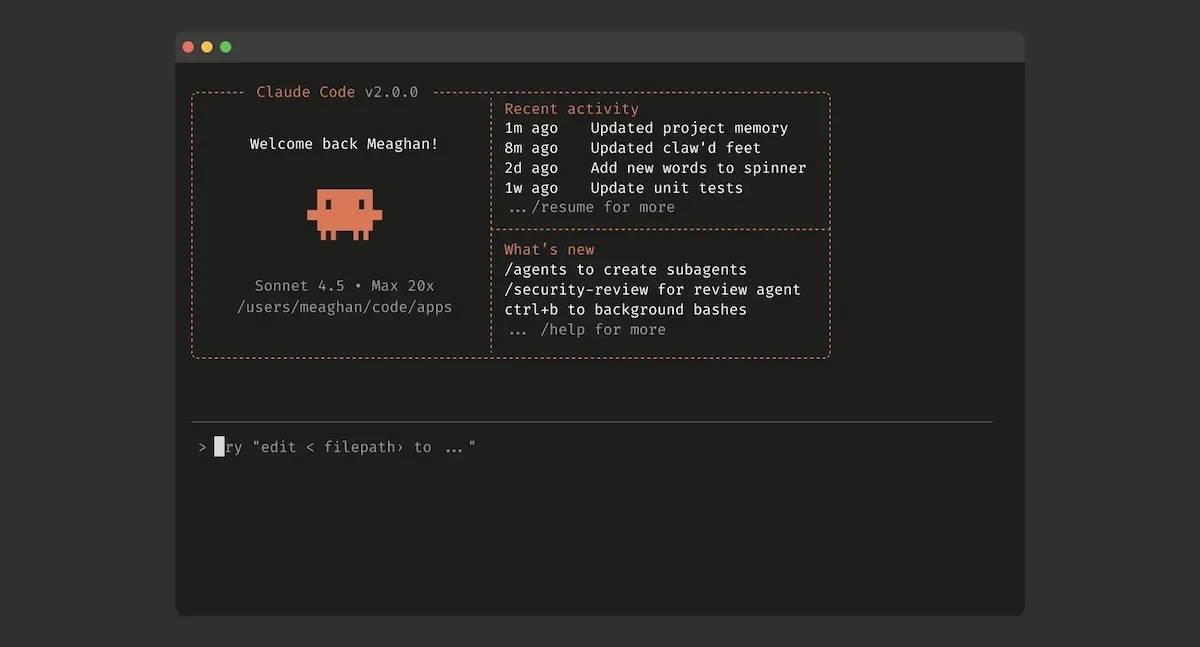

The voice mode feature was first described in an X post by an Anthropic engineer, outlining a /voice command in Claude Code’s CLI and a spacebar-hold interaction that streams spoken instructions to the model. According to the post, developers can issue directives such as “refactor the authentication middleware” without switching to keyboard input. Beyond the initial announcement, Anthropic has not published a formal release note, API documentation update, or security white paper to validate backend behavior, rate-limit policies, or data-handling protocols.

Press outlets including TechCrunch and CryptoRank have echoed the engineer’s description, but none have independently verified the feature’s underlying mechanisms—such as whether third-party transcription services are used, how voice streams are buffered, or if there are caps on interactive sessions. The reliance on a single social media declaration underscores the opacity of the rollout and highlights a recurring theme in AI tooling: feature announcements outpacing governance disclosures.

Market timing and strategic context

This launch unfolds against a backdrop of intensifying competition among AI-powered coding assistants. OpenAI’s Codex introduced a similar voice input scheme on February 26, less than a week earlier, demonstrating the growing priority placed on natural language interfaces. Meanwhile, boutique solutions like Wispr Flow have focused on low-latency dictation integrated into popular IDEs but without deep AI orchestration. Anthropic’s decision to embed voice natively into Claude Code reflects a strategic pivot toward conversational multimodality, even as the company navigates questions about how voice workflows intersect with enterprise policies.

Beyond direct competitors, the broader AI ecosystem is exploring multimodal extensions that combine voice, vision, and code understanding. Anthropic’s voice mode announcement thus represents both a defensive response to rival capabilities and a signal of long-term product ambitions. Yet the absence of a detailed roadmap for feature maturity—covering transcription accuracy improvements, privacy guardrails, or compliance certifications—suggests that voice-driven coding remains an experimental frontier rather than an enterprise-ready solution.

Technical uncertainties in transcription fidelity

Voice-to-code workflows introduce unique challenges around accurately capturing programming constructs. Unlike natural language, code requires precise handling of symbols, indentation, and naming conventions. Early demonstrations of Claude Code voice mode have shown promise in renaming variables or generating boilerplate, but the error tolerance for more complex algorithmic instructions remains untested at scale.

Reportedly, transcription tokens do not count against typical API quotas and incur no immediate cost during the initial rollout. However, without verified benchmarks on error rates (for example, how often operators are misrecognized or whitespace is misplaced), enterprise teams lack the data needed to evaluate risk. Even small transcription inaccuracies can surface as subtle bugs, complicating debugging and potentially undermining trust in voice-based editing.
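
One way a team could begin collecting its own error-rate data is to compare the code a developer intended to dictate with what the transcription actually produced. The sketch below is illustrative only: the tokenizer and the `token_error_rate` helper are hypothetical and are not part of Claude Code or any Anthropic tooling. The metric also ignores whitespace, so indentation errors would need a separate check.

```python
import re

# Hypothetical tokenizer: identifiers, integers, a few multi-character
# operators, then any other single non-space symbol. Whitespace is dropped.
TOKEN_RE = re.compile(r"[A-Za-z_]\w*|\d+|==|!=|<=|>=|->|\S")


def tokenize(code: str) -> list[str]:
    return TOKEN_RE.findall(code)


def edit_distance(ref: list[str], hyp: list[str]) -> int:
    """Levenshtein distance over tokens (insert, delete, substitute)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def token_error_rate(reference: str, transcribed: str) -> float:
    """Token-level edits needed to fix the transcription, per reference token."""
    ref = tokenize(reference)
    if not ref:
        raise ValueError("reference must contain at least one token")
    return edit_distance(ref, tokenize(transcribed)) / len(ref)
```

For instance, if a dictated `<=` is transcribed as `<` in the seven-token line `if a <= b: return a`, the function counts one substitution, an error rate of roughly 14%.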

Governance frameworks and audit needs

The introduction of voice input amplifies existing governance considerations for AI assistants. Where typed commands and generated code are already subject to logging and access controls, spoken interactions add another telemetry layer that must be captured, tracked, and secured. To date, Anthropic has published no specification detailing transcript retention policies, encryption standards, or role-based access mechanisms for voice logs.

In regulated industries—such as finance, healthcare, or government contracting—the ability to trace every request back to an authenticated user and to produce an immutable audit trail is critical. The absence of clear enterprise controls for voice interactions could create compliance blind spots, elevating legal and operational risk during incident investigations or external audits.
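
A common way to make such a trail tamper-evident is to chain log entries by hash, so that altering any earlier record invalidates every later one. The minimal sketch below assumes a hypothetical record layout (user, timestamp, transcript, and a digest of the resulting diff). Nothing here reflects how Anthropic stores voice data, and a production system would also need authenticated identities and write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same body always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], user: str, transcript: str,
                 diff: str, timestamp: str) -> dict:
    """Append a record that links a voice transcript to the diff it produced."""
    body = {
        "user": user,
        "timestamp": timestamp,
        "transcript": transcript,
        "diff_sha256": hashlib.sha256(diff.encode("utf-8")).hexdigest(),
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS,
    }
    entry = {**body, "entry_hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Return False if any entry was modified, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if body["prev_hash"] != prev or _digest(body) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True
```

Because each entry embeds the hash of its predecessor, an auditor who holds only the latest `entry_hash` can detect edits anywhere earlier in the log by re-running `verify_chain`.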

Operational implications and risks

- Symbol and syntax precision: Voice-to-code transcriptions must preserve punctuation, line breaks, and formatting. Inaccurate transcriptions risk injecting syntactic errors that are often harder to diagnose than typed mistakes.

- Data privacy exposure: Voice streams may contain proprietary information, secret keys, or PII. Without transparent policies on how audio is stored, processed, or purged, organizations face potential data leakage risks.

- Audit trail limitations: If transcripts are not tied to immutable logs or lack metadata linking them to specific code changes, teams may struggle to reconcile voice commands with resulting diffs—a challenge for regulated environments.

- Latency and usability trade-offs: Push-to-talk mechanisms reduce accidental input but introduce workflow friction if misfires or delays occur. Efficiency gains hinge on polished error-correction and undo/redo integrations.
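
To make the data-privacy point above concrete, a team that stores transcripts could scrub obvious secrets before they reach any log. The following sketch is an assumption-laden illustration: the `redact` helper and its two patterns (the familiar `AKIA` prefix format of AWS access key IDs and a loose email matcher) are hypothetical examples, not a vetted rule set and not a feature of Claude Code.

```python
import re

# Illustrative patterns only; real deployments need a broader, reviewed set.
PATTERNS = {
    "AWS_ACCESS_KEY_ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
}


def redact(transcript: str) -> tuple[str, list[str]]:
    """Replace matches with labeled placeholders and report which labels fired."""
    found = []
    for label, pattern in PATTERNS.items():
        transcript, count = pattern.subn(f"[REDACTED:{label}]", transcript)
        if count:
            found.append(label)
    return transcript, found
```

Pattern-based redaction catches only secrets with a recognizable shape; it does nothing for proprietary details spoken in ordinary language, which is why retention and access policies still matter.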

Comparison with voice-coding alternatives

OpenAI’s Codex voice input, released on February 26, uses a comparable hold-to-talk interaction but has not publicly detailed enterprise controls or error-rate metrics. Early adopters of Codex voice have reported mixed results around transcription fidelity, suggesting that voice quality and network conditions can influence effectiveness. By contrast, Wispr Flow and similar plugins focus narrowly on capturing dictation in IDEs, without invoking code-aware AI models for interpretation.

Claude Code’s differentiator lies in its promise of model-level understanding: rather than pasting raw text, the assistant is expected to interpret developer intent and generate syntactically valid code. Yet so far, evidence of meaningful model-level gains—such as contextual awareness across multi-step refactors initiated by voice—is anecdotal. Without systematic head-to-head evaluations, claims of superiority over both Codex and niche dictation tools remain provisional.

Open questions for enterprise adoption

Several critical uncertainties stand between voice mode’s introduction and its maturation as a governance-safe enterprise feature. Organizations weighing the potential productivity uplift must contend with unanswered questions around rate-limit exemptions, transcript lifecycle management, and compliance assurances. The following diagnostic points highlight areas where further clarification is needed:

- What are the precise rate-limit policies for voice transcriptions beyond the reported exemption, and how will they change over time?

- How long are raw audio streams and text transcripts retained, and what encryption or access controls protect these records?

- Will Anthropic publish detailed error-rate statistics or confidence scores for transcribed code elements to inform risk assessments?

- How will voice interactions integrate with existing code review and audit workflows, including role-based permissions and immutable logging?

- Are there plans to pursue compliance certifications (e.g., SOC 2, ISO 27001) that explicitly cover voice-based AI interactions?

Conclusion

Anthropic’s voice mode for Claude Code represents a notable step toward more natural, hands-free developer experiences, aligning with broader trends in conversational AI. However, the feature’s reliance on an engineer’s social media post as its primary documentation source—and the absence of formal disclosures around data handling, auditing, and transcription accuracy—underscores an emerging tension in AI product releases. While voice-driven coding holds promise for streamlining simple refactors and boilerplate generation, its current incarnation leaves substantial governance and operational questions unanswered. For enterprise teams, the path to adoption will depend on whether Anthropic can close these gaps with transparent policies and verifiable performance metrics.